Quick answer: The citation economy refers to the emerging model of AI search visibility in which being named and cited inside an AI-generated answer (in Google AI Overviews, ChatGPT, Perplexity, or Gemini) delivers measurable traffic and authority that is increasingly independent of traditional ranking position. In this model, a page ranking sixth can outperform the page ranking first if it is cited inside the AI answer that millions of users read instead of scrolling to the organic results.

For most of SEO’s history, visibility meant one thing: ranking position. The closer to position one, the more clicks. This model worked because searchers had to scroll through a list of results to find answers. The ranked page was the answer delivery mechanism — there was no alternative route to the information.

AI-generated answers change this structure fundamentally. When a query triggers a Google AI Overview, a Perplexity summary, or a ChatGPT response, the user receives a synthesised answer before they see any ranked result. Many users read that answer and stop. The pages cited inside that answer receive attribution — and often traffic — while pages ranked below the AI module may receive nothing at all, regardless of their position.

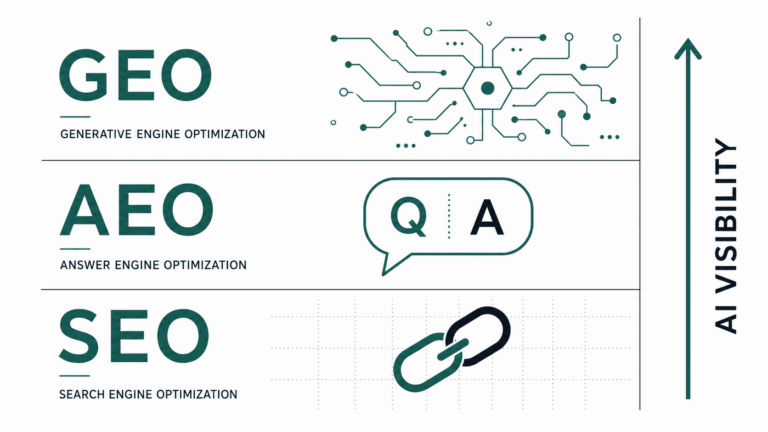

This is the citation economy: a parallel visibility layer where the currency is not rank but citation, and where the rules of earning that citation are different from the rules that govern rankings. This article covers what the citation economy is, which platforms it operates across, what kinds of content earn citations consistently, and how to measure and optimise for citation visibility. For context on the broader framework this fits within, see the complete SEO vs AEO vs GEO guide.

What Is the Citation Economy in AI Search?

The citation economy is a framework for understanding how value flows in AI-first search. In traditional search, value flows through ranked position: higher rank, more clicks, more revenue. In the citation economy, value flows through attribution: being named as a source inside an AI-generated answer that a user trusts and acts on.

The analogy to academic citation is deliberate. In academic publishing, being cited by other researchers is a signal of authority: it means your work is considered credible enough to reference. Citations build a paper’s reputation over time, independent of how well that paper ranks in any search index. AI search citation works similarly: a site that earns consistent citations across ChatGPT, Perplexity, and Google AI Overviews builds an authority signal that compounds, eventually reaching a position where AI systems default to that source when assembling answers in its topic area.

The practical implication is that content strategy in 2026 has two distinct optimisation targets — ranking and citation — and the content structures that maximise each are not identical. Ranking optimisation favours depth, keyword coverage, and backlink authority. Citation optimisation favours information density, entity clarity, atomic answer blocks, and structured content that AI systems can decompose and recombine. The best content does both. Understanding the distinction is what separates practitioners who are capturing the full opportunity from those still optimising for only one layer.

Why Are AI Citations Becoming More Valuable Than Rankings for Some Queries?

The value of an AI citation versus a ranking position depends heavily on query type. Not all queries have shifted to AI-first delivery — and the shift is uneven across verticals, intent types, and platforms. Understanding where citations outperform rankings determines where to prioritise citation optimisation effort.

| Query Type | AI Citation Value | Ranking Value | Strategic Priority |
|---|---|---|---|
| Informational definitions (“What is X?”) | Very high: AI answers dominate, and many users never click through | Declining: position one often sits below the AI module | Optimise primarily for citation |
| How-to and process queries | High: AI Overviews summarise steps, and cited sources get attribution | High: users wanting full detail still click through | Optimise for both simultaneously |
| Comparison and tool queries | Medium: AI summaries exist, but users often want detail | High: bottom-of-funnel (BOFU) intent drives clicks to full comparison posts | Prioritise ranking; add citation structure |
| Transactional / commercial | Low: AI systems are less likely to summarise purchase decisions | Very high: commercial intent drives direct clicks | Focus on traditional ranking signals |
| Research and entity queries | Very high: AI models synthesise research heavily | Medium: results are saturated with authoritative sources | Optimise primarily for citation |

The query types with the highest citation value (definitions and research), together with how-to content, are also the most common query types for content-led sites operating in the AEO Insider space: AI SEO, GEO, workflows, and automation. For this audience, the shift toward citation optimisation is not a future consideration; it is an immediate competitive priority.

The traffic mechanism also differs. Ranking traffic is click-based: users click a result to visit a page. Citation traffic is attribution-based: users see your brand name and domain cited inside an AI answer, may click the citation link, and develop brand familiarity even when they do not click. This second-order brand effect — becoming a named authority that AI systems consistently reference — is a compounding asset that rankings alone do not build.

Which AI Platforms Are Part of the Citation Economy in 2026?

The citation economy operates across five primary platforms in 2026, each with different citation behaviours, source selection patterns, and traffic referral volumes. Understanding how each platform cites content is the prerequisite for optimising toward them.

- Google AI Overviews. The highest-volume citation surface by a wide margin. AI Overviews appear on a significant portion of informational queries in Google Search and cite sources with linked attribution. Pages that rank in the top five for a query have the highest probability of appearing in the AI Overview for that query — but ranking is not the only determinant. Content structure, schema completeness, and topical authority all influence selection independently of position.

- Perplexity. A native AI answer engine that cites sources explicitly for every response. Perplexity retrieves pages in real time during answer generation, which means freshness and crawlability matter more here than on platforms using static training data. Sites with clean technical foundations and entity-rich content tend to appear consistently across repeated Perplexity queries in their topic area.

- ChatGPT (with Search). ChatGPT’s search-enabled mode retrieves and cites web content in real time for queries where current information is needed. Citation behaviour in ChatGPT Search is similar to Perplexity — frequency, recency, and structured content quality influence which sources are selected. The referral traffic from ChatGPT citations is currently small in absolute terms but highly engaged and growing.

- Microsoft Copilot. Powered by Bing’s index and OpenAI’s GPT models, Copilot cites sources across its answer modules in Edge, Windows, and the web. Bing Webmaster Tools data is the primary way to audit Copilot citation performance. Sites well-indexed in Bing tend to appear in Copilot answers for the same queries, which makes Bing indexing health a specific action item for citation economy participation.

- Gemini (Google). Google’s Gemini surfaces citations in its conversational interface and increasingly integrates with Google Search results. Citation selection in Gemini draws on the same indexing and authority signals as Google AI Overviews, making the optimisation approach directly transferable.

A practical priority order for most content-led SEO sites: optimise for Google AI Overviews first (highest volume), then Perplexity (the most explicit citations, and the easiest to measure), then ChatGPT Search (fastest growing). Copilot and Gemini are largely covered by the same structural work that improves Google AI Overview and Perplexity citations.

What Types of Content Get Cited Most Frequently by AI Models?

AI models do not cite randomly. They favour content that is structured for extraction — content that can be decomposed into clean, attributable information units without requiring the AI system to interpret ambiguous prose or reconstruct meaning from loosely organised paragraphs.

The content characteristics most consistently associated with high citation frequency are:

- Short, direct answer blocks. A 40–80 word definition or answer positioned near the top of a page — before any background context — is the single highest-citation-probability content pattern. AI systems extract these blocks cleanly because they are self-contained and attributable. Longer answers that require the AI to summarise rather than extract are cited less frequently.

- Explicit entity definitions. Content that defines key entities by name — stating what they are, how they relate to other entities, and what distinguishes them — is significantly more citable than content that assumes reader familiarity. AI models need named, defined entities to attribute citations with confidence. Implicit references (“the practice we’ve been discussing”) do not get cited.

- Structured lists and numbered steps. Ordered and unordered lists with clear, complete items are extracted more reliably than equivalent information embedded in prose. If a page explains a five-step process in paragraph form, the AI system must do interpretive work to extract the steps. If the same information is in a numbered list, extraction is direct and attribution is clean.

- Original statistics and data points. AI models prioritise sources with specific, verifiable data over sources that restate general claims. A page that reports “27% of informational queries now trigger a Google AI Overview” is more citable than a page that says “many queries now trigger AI Overviews.” Specific numbers, named studies, and original data collection are citation magnets.

- Question-based subheadings. Pages structured with subheadings phrased as questions — matching the conversational queries AI systems are responding to — are retrieved more reliably for those query types. A subheading of “What is Generative Engine Optimization?” maps directly to a user asking that question, creating a clean extraction match.

- FAQPage schema. Pages with FAQPage JSON-LD schema provide machine-readable question-answer pairs that AI systems can extract without interpreting HTML structure. This is the technical complement to question-based content structure — the content format and the schema format working together to maximise extractability.

These characteristics are not independent of each other. The most consistently cited pages in any topic area combine all of them: a direct answer block at the top, explicit entity definitions throughout, structured lists and steps where appropriate, original data, question-based subheadings, and FAQPage schema. Treating these as a checklist for every informational article — rather than as occasional refinements — is the citation economy content strategy in practice.
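Some of these characteristics can be checked mechanically before publication. The sketch below is a minimal, hypothetical audit that assumes the page has already been reduced to plain text; the `Page` structure and `audit_extractability` function are illustrative names, not part of any SEO tool. It checks answer-block length against the 40–80 word range, whether every H2 ends with a question mark (a crude proxy for question phrasing), and whether FAQ content is backed by FAQPage schema. Entity definitions and original data still need human review.

```python
from dataclasses import dataclass, field


# Hypothetical, pre-extracted view of one page. Populating it from real HTML
# (for example with an HTML parser) is out of scope for this sketch.
@dataclass
class Page:
    url: str
    answer_block: str                                    # opening "Quick answer" text
    h2_headings: list[str] = field(default_factory=list)
    faq_pairs: list[tuple[str, str]] = field(default_factory=list)
    has_faqpage_schema: bool = False


def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def audit_extractability(page: Page, min_words: int = 40, max_words: int = 80) -> dict[str, bool]:
    """Check the mechanically testable items from the citation checklist."""
    headings = [h.strip() for h in page.h2_headings]
    return {
        # Direct answer block sized for clean extraction (40-80 words by default).
        "answer_block_in_range": min_words <= word_count(page.answer_block) <= max_words,
        # Every H2 phrased as a question; a trailing "?" is only a rough proxy.
        "h2s_are_questions": bool(headings) and all(h.endswith("?") for h in headings),
        # FAQ content present and backed by FAQPage JSON-LD.
        "faq_with_schema": bool(page.faq_pairs) and page.has_faqpage_schema,
    }


if __name__ == "__main__":
    page = Page(
        url="https://example.com/citation-economy",      # placeholder URL
        answer_block=" ".join(["word"] * 55),            # stand-in 55-word answer
        h2_headings=["What Is the Citation Economy in AI Search?", "The Bottom Line"],
    )
    print(audit_extractability(page))
```

A `False` result is a prompt for an editor to review the page rather than a verdict; in the example, the statement-form heading "The Bottom Line" and the missing FAQ would both be flagged.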

How Do You Measure Citation Visibility Across AI Platforms?

Measuring citation visibility is less mature than measuring ranking performance, but the data sources available in 2026 are sufficient to build a working citation audit practice. The measurement approach combines platform-native analytics, third-party tools, and manual audits.

| Platform | Measurement Method | Tool |
|---|---|---|
| Google AI Overviews | AI Overview impressions and clicks, which Google Search Console counts within the Performance report’s Web search type rather than as a separate segment; third-party AI Overview tracking | Google Search Console, Semrush AI Overviews report, SE Ranking AI Overview tracker |
| Perplexity | Referral traffic from perplexity.ai in GA4; manual citation audits (query target keywords in Perplexity and note which pages are cited) | GA4 (source: perplexity.ai), manual audit |
| ChatGPT | Referral traffic from chatgpt.com in GA4; manual citation checks for key queries | GA4 (source: chatgpt.com), manual audit |
| Microsoft Copilot | Bing Webmaster Tools impression data; referral traffic from Copilot (copilot.microsoft.com, formerly bing.com/chat) in GA4 | Bing Webmaster Tools, GA4 |
| Gemini | Referral traffic from gemini.google.com in GA4; Search Console covers Google Search surfaces such as AI Overviews, not the Gemini app itself | GA4 (source: gemini.google.com), GSC |

Build a weekly citation audit into your reporting workflow using three data points: AI referral traffic in GA4 (sessions from all AI platform sources combined), Google Search Console impressions for target keywords that trigger AI Overviews, and a manual audit of five to ten target queries in Perplexity and ChatGPT to confirm whether your pages appear as cited sources. This combination takes approximately 30 minutes per week and provides enough signal to identify which content is earning citations and which is not.
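The first and third of those data points can be tallied with a short script. This is a minimal sketch under stated assumptions: GA4 sessions exported to CSV by session source, with hypothetical column names `session_source` and `sessions` (rename them to match your export), and manual audit results logged as `(platform, query, cited)` rows. The Copilot domain in the mapping is an assumption worth checking against how Copilot referrals actually appear in your own GA4 property.

```python
import csv
import io
from collections import defaultdict

# Referrer domains mapped to AI platforms. The Copilot entry is an assumption;
# confirm it against the session sources in your GA4 property.
AI_SOURCES = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_sessions_by_platform(csv_text: str) -> dict[str, int]:
    """Sum GA4 sessions per AI platform from a source/sessions CSV export.

    Assumes hypothetical columns named 'session_source' and 'sessions';
    non-AI sources (google, direct, and so on) are ignored.
    """
    totals: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        platform = AI_SOURCES.get(row["session_source"].strip().lower())
        if platform:
            totals[platform] += int(row["sessions"])
    return dict(totals)


def citation_rate(audit_rows: list[tuple[str, str, bool]]) -> dict[str, float]:
    """Share of manually audited queries, per platform, where one of your pages was cited."""
    checked: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for platform, _query, was_cited in audit_rows:
        checked[platform] += 1
        cited[platform] += int(was_cited)
    return {p: cited[p] / checked[p] for p in checked}


if __name__ == "__main__":
    export = "session_source,sessions\nchatgpt.com,120\nperplexity.ai,45\ngoogle,9000\n"
    print(ai_sessions_by_platform(export))
    audit = [
        ("Perplexity", "what is the citation economy", True),
        ("Perplexity", "how to win ai citations", False),
        ("ChatGPT", "what is the citation economy", True),
    ]
    print(citation_rate(audit))
```

Tracking the citation rate per platform week over week is more informative than any single week's value, since a five-to-ten query sample is small and noisy.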

Specialist tools including Profound, Otterly.AI, and AthenaHQ offer more automated citation tracking across AI platforms — useful for sites targeting citation performance at scale or for agencies reporting AI visibility to clients. These tools are early-stage in 2026 but improving rapidly. For most practitioners, the GA4 plus GSC approach is sufficient to start.

How Do You Optimise Content to Win More AI Citations?

Citation optimisation is not a separate workflow from content production — it is a structural layer applied to every piece of content before publication. The six highest-leverage actions for increasing citation frequency are:

- Add a direct answer block to every informational article. Position a 40–80 word answer to the article’s primary question within the first 150 words of the post — before any background or context. Label it “Quick answer:” or format it with a left-border callout. This block should be complete enough to stand alone as a cited answer without the surrounding content.

- Rephrase every H2 as a question. Convert section headers from statement form (“The Benefits of GEO”) to question form (“What Are the Benefits of Generative Engine Optimization?”). Question-phrased H2s map directly to conversational queries, making it significantly easier for AI retrieval systems to match your content to the queries they are responding to.

- Declare entities explicitly at first use. Every key entity introduced in an article should be defined by name at first mention — even if the audience is assumed to know what it is. “Generative Engine Optimization (GEO) is the practice of structuring content so AI models can cite it inside AI-generated answers” is more citable than assuming the reader already knows. Entity declarations are the machine-readable anchors AI systems use when attributing citations.

- Add a FAQ section with at least five questions and implement FAQPage schema. The FAQ section is the highest-return addition to any informational article for citation purposes. Five or more complete Q&A pairs, each with a direct answer under 80 words and marked up with FAQPage JSON-LD (applied via a plugin such as Rank Math on WordPress, or added by hand), give AI systems multiple clean extraction points per page.

- Include two to three external citations per article. Linking to primary sources — official documentation, published research, named industry reports — signals to AI systems that your content is positioned within a broader information ecosystem rather than standing alone. Sites that cite authoritative sources are treated as more trustworthy citation candidates themselves.

- Audit and improve information density on existing high-traffic pages. AI citations are not earned only by new content. High-traffic pages that rank well but lack direct answer blocks, entity definitions, or FAQ sections are leaving citation value on the table. A structured content refresh — adding the citation elements above without rewriting the core content — can improve AI citation frequency within weeks of re-indexing.
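The list above applies FAQPage JSON-LD via Rank Math; for sites without that plugin, the markup is simple enough to produce directly. A minimal sketch, assuming the Q&A pairs are already written: the hypothetical `faqpage_jsonld` helper emits schema.org FAQPage JSON-LD (a `FAQPage` whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer` of type `Answer`) and rejects input that misses the five-question, under-80-word guideline. Those thresholds are editorial parameters from this article, not schema requirements.

```python
import json


def faqpage_jsonld(pairs: list[tuple[str, str]], min_questions: int = 5,
                   max_answer_words: int = 80) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs.

    Raises ValueError if there are too few pairs or an answer is not
    under max_answer_words (editorial guideline, not a schema rule).
    """
    if len(pairs) < min_questions:
        raise ValueError(f"expected at least {min_questions} Q&A pairs, got {len(pairs)}")
    for question, answer in pairs:
        if len(answer.split()) >= max_answer_words:
            raise ValueError(f"answer to {question!r} is not under {max_answer_words} words")
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    faqs = [(f"Example question {i}?", f"Example answer {i}.") for i in range(1, 6)]
    snippet = faqpage_jsonld(faqs)
    # Wrap in a JSON-LD script tag for embedding in the page.
    print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The visible FAQ text and the JSON-LD should say the same thing; generating both from one list of pairs keeps them in sync.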

These six actions apply regardless of whether you are publishing new content or refreshing existing pages. For a site building topical authority from scratch, applying all six consistently from post one is the fastest path to establishing a citation presence in an AI-first search environment. For an established site, the refresh audit — identifying existing high-traffic pages missing these elements and adding them systematically — delivers citation improvements with less content investment than creating new articles.
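The refresh audit is essentially a filter and a sort over existing pages. A minimal sketch, assuming a hypothetical content inventory in which each page records monthly sessions and which citation elements it already has; the five element labels (one per content action in the list above) and the 500-session threshold are illustrative assumptions. It keeps high-traffic pages missing at least one element and lists the busiest first, so refresh effort lands where the most existing traffic already is.

```python
from dataclasses import dataclass

# Illustrative labels for the five content elements in the optimisation list.
CITATION_ELEMENTS = frozenset({
    "answer_block", "question_h2s", "entity_definitions",
    "faq_with_schema", "external_citations",
})


@dataclass(frozen=True)
class InventoryPage:
    url: str
    monthly_sessions: int
    elements_present: frozenset[str]


def refresh_queue(pages: list[InventoryPage],
                  min_sessions: int = 500) -> list[tuple[str, list[str]]]:
    """Return (url, missing elements) for pages above the traffic threshold, busiest first."""
    candidates = [
        p for p in pages
        if p.monthly_sessions >= min_sessions and CITATION_ELEMENTS - p.elements_present
    ]
    candidates.sort(key=lambda p: p.monthly_sessions, reverse=True)
    return [(p.url, sorted(CITATION_ELEMENTS - p.elements_present)) for p in candidates]


if __name__ == "__main__":
    inventory = [
        InventoryPage("/what-is-geo", 4200, frozenset({"answer_block", "question_h2s"})),
        InventoryPage("/aeo-checklist", 900, CITATION_ELEMENTS),   # already complete
        InventoryPage("/old-news", 120, frozenset()),              # below threshold
    ]
    for url, missing in refresh_queue(inventory):
        print(url, missing)
```

Sorting by sessions alone is a deliberate simplification; a fuller version might also weight pages by how many elements are missing.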


The Bottom Line

The citation economy is not a prediction — it is already the operating environment for content-led sites in 2026. AI-generated answers are the first result millions of users see for informational queries. The sites cited inside those answers are accumulating brand authority, referral traffic, and topical credibility that compounds independently of their ranking positions.

The optimisation work is not technically complex. Answer blocks, question-based subheadings, entity definitions, FAQ sections with schema, and external citations — applied consistently across every informational article — are the structural decisions that determine whether a page participates in the citation economy or sits beneath it. The practitioners who understand this and act on it now are building citation authority while most of their competitors are still optimising exclusively for position one.

Next: see the full Generative Engine Optimization (GEO) guide for the complete content architecture framework that underpins citation optimisation — or go deeper on the technical foundations with the technical SEO guide for AI-first search.